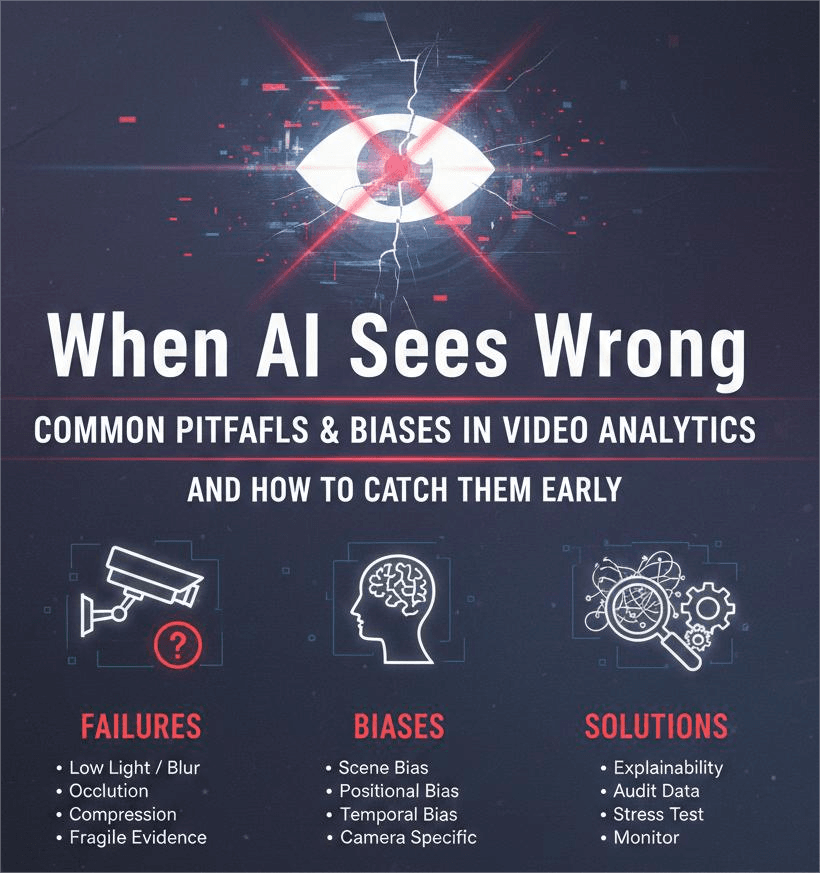

When AI Sees Wrong: Common Pitfalls & Biases in Video Analytics, and How to Catch Them Early

Video analytics has become one of the most relied-upon layers in modern AI systems. From security cameras to automated retail, medical triage to wildlife monitoring, video-based models constantly transform raw pixels into high-level understanding. But as powerful as they are, these models can, and often do, see the world incorrectly.

And when AI sees wrong, the consequences ripple outward: false alarms, undetected events, unfair outcomes, loss of trust, and in some domains, real harm.

In this post, we’ll explore where video analytics models typically fail, why these failures happen, and how explainability techniques can help you identify problems before they become system-breaking issues.

1. The Fragility of Visual Evidence

Video models rely heavily on patterns in the data they’ve been fed. But in real-world environments, conditions shift constantly: lighting, weather, angles, motion speeds, occlusion, compression artifacts, and even lens quality.

Common failure points:

- Low light or backlighting: Silhouettes often cause misclassification or missed detections.

- Motion blur: Fast-moving objects (hands, vehicles, wings, sports actions) confuse trackers and detectors.

- Camera compression & frame rate drops: Many “failures” are simply the result of degraded data.

- Occlusion: If half the object is hidden, the model may guess incorrectly, or not guess at all.

These issues are particularly painful in domains like retail analytics, behavior recognition, or wildlife tracking where tiny details matter.

The good news? Many of these problems can be uncovered early by visually inspecting the model’s decision process.

2. The Bias You Don’t See Until You Look Closely

Bias in video models isn’t only demographic, though that’s a serious concern. Video datasets embed environmental and contextual biases too.

Types of bias that creep into video analytics:

- Scene bias: A model associates actions or objects with certain environments. For example, if “running” is learned only from outdoor scenes, indoor running goes undetected.

- Positional bias: If most training samples show people centered in frame, edge-of-frame objects get ignored.

- Temporal bias: Models trained on short, trimmed clips struggle with long continuous footage.

- Camera-specific bias: Differences in resolution, color temperature, or lens distortions break generalization.

These biases let a model perform beautifully in lab tests, where the validation data shares the same quirks as the training data, and then fail suddenly in production.
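Positional bias is one of the easier ones to check for directly: split ground-truth objects by where they sit in the frame and compare recall per region. The following is a minimal sketch, assuming you already know which ground-truth objects the model matched. The `GroundTruthObject` record, the `edge_margin` value, and the function names are illustrative assumptions, not part of any library.

```python
from dataclasses import dataclass

@dataclass
class GroundTruthObject:
    cx: float        # box centre x, normalised to [0, 1]
    cy: float        # box centre y, normalised to [0, 1]
    detected: bool   # True if the model matched this object

def region_of(obj, edge_margin=0.15):
    """Label an object 'edge' if its centre lies within edge_margin of any border."""
    near_edge = (
        obj.cx < edge_margin or obj.cx > 1 - edge_margin
        or obj.cy < edge_margin or obj.cy > 1 - edge_margin
    )
    return "edge" if near_edge else "center"

def recall_by_region(objects, edge_margin=0.15):
    """Return {region: recall} over the ground-truth objects in each region."""
    hits, totals = {}, {}
    for obj in objects:
        region = region_of(obj, edge_margin)
        totals[region] = totals.get(region, 0) + 1
        hits[region] = hits.get(region, 0) + int(obj.detected)
    return {region: hits[region] / totals[region] for region in totals}
```

A large gap between the "center" and "edge" recall is a hint that the training data under-represents objects near the frame border. The same grouping idea works for scene type or camera ID.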

3. Why Traditional Evaluation Isn’t Enough

Aggregate metrics like mAP, precision, recall, F1, or IoU summarize performance over an entire test set, so they don’t capture the true performance landscape of video models. They average away the rare, worst failures, which are often the ones that matter most.

Real-world performance issues often live in the outliers:

- a single missed suspicious behavior

- a single false gun detection

- one misinterpreted action in a hospital or factory

- or one incorrectly labeled animal species in a conservation project

Traditional metrics simply can’t reveal why the model made a mistake.
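To see how an average can hide an outlier, it helps to report the worst-performing slice next to the overall number. Here is a small sketch with toy data. The slice names ("daylight", "night") and the record format are assumptions made for illustration.

```python
from collections import defaultdict

def recall_per_slice(records):
    """records: iterable of (slice_name, was_detected) pairs, one per ground-truth event."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for slice_name, was_detected in records:
        totals[slice_name] += 1
        hits[slice_name] += int(was_detected)
    return {name: hits[name] / totals[name] for name in totals}

def summarize(records):
    """Return overall recall alongside the worst-performing slice (records must be non-empty)."""
    records = list(records)
    overall = sum(int(detected) for _, detected in records) / len(records)
    per_slice = recall_per_slice(records)
    worst = min(per_slice, key=per_slice.get)
    return overall, worst, per_slice[worst]

# Toy data: 90 daylight events (all detected) and 10 night events (2 detected).
records = [("daylight", True)] * 90 + [("night", True)] * 2 + [("night", False)] * 8
overall, worst, worst_recall = summarize(records)
print(f"overall={overall:.2f} worst={worst} ({worst_recall:.2f})")
# prints: overall=0.92 worst=night (0.20)
```

An overall recall of 0.92 looks healthy, yet the model misses most night-time events. The number alone still does not say why, which is the gap explainability is meant to fill.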

This is where explainability becomes essential.

4. Explainability Shows You Where the Model Is Looking (and Why It’s Wrong)

Explainable ML techniques help uncover the hidden processes behind a model’s predictions. For video analytics, they’re indispensable.

In one of our previous posts, “Teaching AI to Speak in Human Concepts,” we explored how concept-based explainability can reveal which human-understandable features a model relies on. That same idea can expose video-related failure modes, such as:

- the model over-focusing on irrelevant background patterns

- reliance on clothing color instead of action cues

- confusing shadows or reflections for real objects

- identifying people by context instead of movement

- or using dangerous shortcuts like associating “dangerous events” with specific room types

Similarly, our article “Diffusion-Driven Counterfactuals for Video AI” touched on the power of counterfactuals: altering an input to see what would change the AI’s mind.

In video analytics, counterfactuals can show you:

- what minimal change flips a behavior classification

- how sensitive your model is to occlusion or lighting

- which frames carry the most decision weight

These tools can reveal failures earlier and more clearly than aggregate metrics alone.
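A very crude way to estimate which frames carry the most decision weight is a leave-one-out perturbation: replace each frame with its neighbour and measure how much the class score drops. This is a simple stand-in, not the diffusion-driven counterfactual approach mentioned above. In the sketch below, `score_fn` is a hypothetical callable that wraps your model and returns a score for the class of interest.

```python
def frame_importance(clip, score_fn):
    """Estimate each frame's influence by replacing it with a neighbouring frame.

    clip:     sequence of frames (any type score_fn accepts), length >= 2
    score_fn: hypothetical callable mapping a list of frames to a class score
    Returns a list of score drops, one per frame; larger means more influential.
    """
    frames = list(clip)
    if len(frames) < 2:
        raise ValueError("need at least two frames")
    baseline = score_fn(frames)
    drops = []
    for i in range(len(frames)):
        # Use the previous frame as the replacement, or the next one for frame 0.
        neighbour = frames[i - 1] if i > 0 else frames[1]
        perturbed = frames[:i] + [neighbour] + frames[i + 1:]
        drops.append(baseline - score_fn(perturbed))
    return drops
```

If the largest drops land on frames where nothing relevant is happening, the model is probably keying on something other than the action itself. Note that this needs one forward pass per frame, so it is best run on a sample of clips.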

5. Annotation Problems: The Invisible Root of Most Errors

Many AI failures originate not in the model, but in the data labeling process. Typical annotation errors in video datasets include:

- Inconsistent bounding boxes between frames

- Ambiguous action labels

- Misaligned timestamps

- Annotators interpreting events differently

- Missing labels for partially visible objects

These inconsistencies train the model to behave inconsistently. Worse, the model may learn to generalize from the wrong features. Explainability tools can highlight annotation errors by revealing that:

- the model focuses on backgrounds that annotators consistently mislabeled,

- it fails on classes where annotation ambiguity was high,

- or it learned to detect objects based on accidental patterns in mislabeled frames.

If explainability shows “weird” attention (for example, the model highlights walls instead of people), that’s often an annotation issue, not a training issue.
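Some annotation problems can also be caught with a simple automated check before any model is trained. As one example, inconsistent bounding boxes between frames tend to show up as a sudden drop in overlap between a track's consecutive boxes. The sketch below assumes boxes are `(x1, y1, x2, y2)` tuples and that `min_iou=0.5` is a reasonable starting threshold; both are assumptions to tune for your data.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def flag_box_jumps(track, min_iou=0.5):
    """Return frame indices where a track's box overlaps its predecessor by less than min_iou.

    track: list of (x1, y1, x2, y2) boxes for one object, one per consecutive frame.
    """
    return [
        i for i in range(1, len(track))
        if iou(track[i - 1], track[i]) < min_iou
    ]
```

Fast-moving objects can legitimately produce low overlap between frames, so flagged indices are candidates for human review rather than confirmed errors.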

6. Pitfall: Over-trusting Pretrained Models

Pretrained video models look attractive because they're inexpensive and easy to integrate. But they carry the biases and blind spots of the datasets they were trained on.

These models often fail when:

- your environment is not in their training distribution

- your actions differ from what they were trained to recognize

- your camera setup doesn’t match theirs

- your subjects behave or move differently

Pretrained models are helpful starting points, but without fine-tuning and explainability analysis, they can be ticking time bombs.

7. Closing the Gap: How to Make Your Video Analytics More Reliable

Here’s a practical roadmap for catching bias and failure modes before deployment:

✔️ Use explainability from day one

Concept-based methods (like TCAV), saliency maps, counterfactuals, and frame-level importance tools reveal when your model is focusing on the wrong cues.

✔️ Audit your annotations

Randomly sample, cross-check, and examine “borderline cases.” In video, small annotation inconsistencies multiply.

✔️ Test on real deployment footage, not just your validation set

Real-world conditions are unpredictable. Validate on the actual behaviors, motion patterns, and lighting your system will face.

✔️ Stress test the system

Try:

- low light

- camera shake

- occlusion

- compressed footage

- edge cases (rare actions)
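Several of these conditions can be approximated synthetically before you have hard footage on hand. The helpers below are a rough sketch using NumPy, assuming each frame is an `H x W x C` `uint8` array. The function names and default parameters are illustrative, and the block-averaging in `coarsen` is only a loose stand-in for real codec artifacts.

```python
import numpy as np

def darken(frame, factor=0.3):
    """Simulate low light by scaling pixel intensities (factor in (0, 1])."""
    return (frame.astype(np.float32) * factor).clip(0, 255).astype(np.uint8)

def occlude(frame, top, left, height, width):
    """Simulate occlusion by blacking out a rectangle; returns a copy."""
    out = frame.copy()
    out[top:top + height, left:left + width] = 0
    return out

def shake(frame, dx, dy):
    """Simulate camera shake with a wrap-around pixel shift (crude but dependency-light)."""
    return np.roll(frame, shift=(dy, dx), axis=(0, 1))

def coarsen(frame, block=8):
    """Rough stand-in for compression loss: average each block x block tile."""
    h, w = frame.shape[:2]
    h2, w2 = h - h % block, w - w % block   # crop to a multiple of the block size
    tiles = frame[:h2, :w2].reshape(h2 // block, block, w2 // block, block, -1)
    means = tiles.mean(axis=(1, 3), keepdims=True)
    return np.broadcast_to(means, tiles.shape).reshape(h2, w2, -1).astype(np.uint8)
```

Run the model on the original and perturbed versions of the same clips and compare the results per perturbation. Synthetic degradation does not replace real difficult footage, but it is a cheap first signal of where the model is brittle.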

✔️ Monitor continuously

Real-world footage shifts constantly, and video analytics models degrade as their environments change.
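One lightweight way to notice such shifts is to track a simple per-frame statistic and compare recent footage against a reference period. The sketch below uses mean brightness as the statistic and a threshold of three standard deviations; both choices are assumptions for illustration, and the same check could be applied to model confidence scores.

```python
from statistics import mean, stdev

def drift_score(reference, recent):
    """How many reference standard deviations the recent mean has moved.

    reference, recent: lists of a per-frame statistic (e.g. mean brightness).
    reference needs at least two values.
    """
    spread = stdev(reference)
    if spread == 0:
        return 0.0 if mean(recent) == mean(reference) else float("inf")
    return abs(mean(recent) - mean(reference)) / spread

def has_drifted(reference, recent, threshold=3.0):
    """Flag drift when the shift exceeds a (hypothetical) threshold."""
    return drift_score(reference, recent) > threshold
```

A flag here does not prove the model is failing. It is a prompt to pull a sample of recent footage and re-run your evaluation and explainability checks on it.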

Conclusion

Video analytics is powerful, but it is also fragile. Models can misinterpret shadows, overfit to backgrounds, misread human behavior, or inherit bias from poorly constructed datasets. Traditional validation metrics can hide these problems, but explainability reveals them.

By combining:

- good annotation practices

- robust evaluation

- concept-level explainability (like TCAV)

- counterfactual inspection of model decisions

…you can build video AI systems that fail less, generalize better, and behave more transparently.

When AI sees wrong, it usually doesn’t hide it. You just need the right tools to see how and why it happened.